Inside Apple’s Quest to Fix the Uncanny Valley

If you are a Game of Thrones fan, you probably know how much harder the CGI for the dire wolves was than for the dragons. Wolves are real animals, so the CGI has to be incredibly realistic, especially the fur, the movement, and the interaction with the environment.

All of these are complex to render, fur most of all.

Since then, studios have become really good at rendering fur and hair. Part of the reason is that there are now powerful graphics cards and supercomputers to help them out.

The challenge now, however, is to do the same with far less computing power.

If you’ve ever seen a digital avatar that looked almost real but somehow made you uneasy, you’ve visited the Uncanny Valley. It’s that eerie zone where a simulation is realistic enough to appear human, yet certain characteristics still seem unnatural.

When Apple launched the Vision Pro, its “Persona” feature, which scans your face to create a digital twin for FaceTime, landed right in this valley. Early Personas looked stiff, ghostly, or strangely smooth.

Since launch, the Persona feature has evolved. Initial reviews called it “deeply weird” and uncanny, but the visionOS 26 and M5 chip updates brought significant improvements in realism. It now captures more detail, such as skin texture, jewelry, and better side profiles, though some stiffness and uncanny valley effects remain.

Apple knows this and is working to close the remaining gap.

Recent patent filings reveal that Apple has been sitting on a technical blueprint to address this issue, and it doesn’t involve increasing raw power. Instead, it involves a surprisingly clever approach to computer graphics.

Why Digital Humans Look Like Plastic

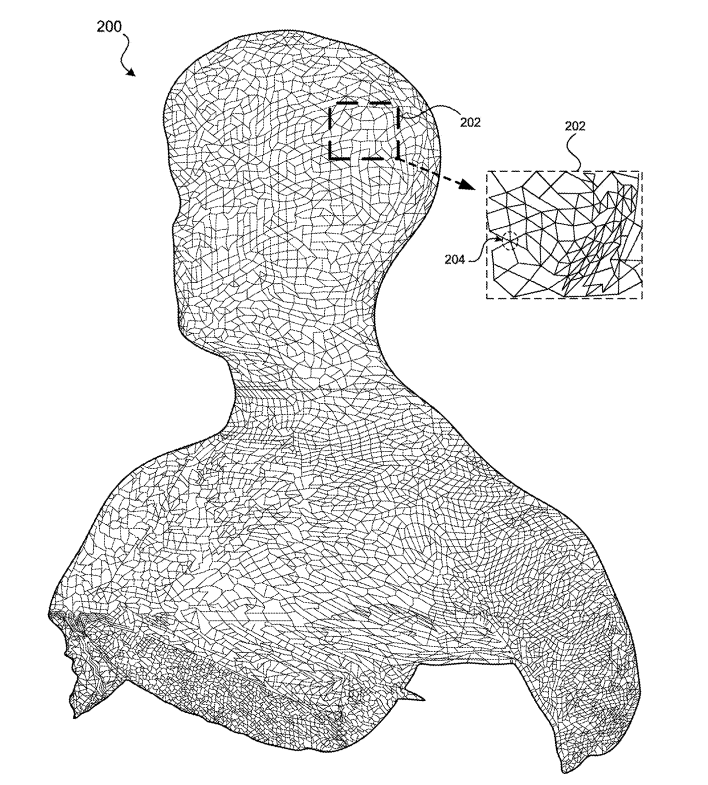

To a computer, a human face is just a math problem. For decades, the industry standard for solving this problem was the polygonal mesh. Imagine a wireframe net wrapped around your head. If you use enough tiny triangles, you can approximate the curve of a nose or a cheekbone as closely as you like.
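As a rough illustration of why "enough tiny triangles" works, here is a minimal sketch using a 2D analogy: a circle approximated by straight segments, the way a mesh approximates a curved cheek. The function name `max_gap` and the 80 mm radius are assumptions made for this example, not figures from the patent.

```python
import math

def max_gap(radius_mm: float, segments: int) -> float:
    """Largest distance between a circle and the regular polygon inscribed in it.

    Each straight edge cuts across the arc; the gap is widest at the
    middle of the edge and equals r * (1 - cos(pi / n)).
    """
    return radius_mm * (1.0 - math.cos(math.pi / segments))

if __name__ == "__main__":
    # Hypothetical head cross-section with an 80 mm radius.
    for n in (8, 32, 128, 512):
        print(f"{n:4d} segments -> worst-case gap {max_gap(80.0, n):.4f} mm")
```

The gap shrinks quickly as the segment count grows, but every extra segment is more geometry to store and draw, which is the trade-off the rest of this article is about.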

But humans aren’t made of hard surfaces. We are soft, translucent, and covered in hair.

Hair is the nightmare of real-time rendering. A human head has over 100,000 strands, each interacting with light, casting shadows on each other, and moving independently.

If you try to render hair using traditional polygons, you hit two walls:

- The Helmet Effect: To save processing power, computers usually group hair into thick “clumps.” This makes your avatar look like it’s wearing a solid plastic wig (think LEGO hair).

- The Compute Wall: Rendering individual strands requires a supercomputer, not a battery-powered headset strapped to your face.

Now, for a device like Vision Pro, the issue is: How do you render photorealistic, volumetric hair on a mobile chip without melting the device?

The Solution: The “Hybrid Composite” Hack

Apple is not trying to find one tool to render the whole human. Instead, the patent treats the human body as a collage, stitching together three completely different rendering technologies into one image.

Apple’s system breaks down into three parts:

1. The Body

For your torso and clothes, the system uses a technique called Pixel-aligned Implicit Function (PIFu). Traditional scanning requires complex 3D cameras.

PIFu uses deep learning to “guess” the 3D shape based on a 2D image. It effectively “shrink-wraps” a texture around your torso.

Since a human’s chest and shoulders don’t require high-frequency details (like individual pores moving), this creates a solid, believable base with relatively low computing power.
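To make the "guessing" step more concrete, here is a minimal sketch of the core PIFu idea: project a 3D point onto the image, look up the image feature at that pixel, append the point's depth, and ask a small network whether the point is inside the body. Everything here is a stand-in: the feature map is random rather than produced by a trained image encoder, the network weights are untrained, and the names `project` and `occupancy` are hypothetical. It is not Apple's implementation.

```python
import math
import random

random.seed(0)

GRID = 16         # hypothetical feature map resolution (GRID x GRID)
FEATURE_DIM = 4   # hypothetical number of features per pixel
HIDDEN = 8        # hypothetical hidden layer width

# Stand-in for the output of an image encoder; random values, not learned.
feature_map = [[[random.uniform(-1, 1) for _ in range(FEATURE_DIM)]
                for _ in range(GRID)] for _ in range(GRID)]

# Untrained weights for a tiny two-layer network.
w1 = [[random.gauss(0, 0.5) for _ in range(FEATURE_DIM + 1)] for _ in range(HIDDEN)]
w2 = [random.gauss(0, 0.5) for _ in range(HIDDEN)]

def project(point):
    """Orthographic projection of (x, y, z), with x and y in [-1, 1], to (row, col)."""
    x, y, _z = point
    col = min(GRID - 1, max(0, int((x + 1) / 2 * GRID)))
    row = min(GRID - 1, max(0, int((1 - y) / 2 * GRID)))
    return row, col

def occupancy(point):
    """Score in (0, 1) that a 3D point lies inside the surface."""
    row, col = project(point)
    # Pixel-aligned feature plus the point's depth along the camera ray.
    inputs = feature_map[row][col] + [point[2]]
    hidden = [max(0.0, sum(w * v for w, v in zip(ws, inputs))) for ws in w1]
    logit = sum(w * h for w, h in zip(w2, hidden))
    return 1.0 / (1.0 + math.exp(-logit))

if __name__ == "__main__":
    print(occupancy((0.1, 0.2, 0.3)))
```

In a trained system, querying many points this way and keeping the boundary where the score crosses 0.5 yields the surface. The appeal is that the shape lives in the network and the image features, so no depth camera is required for the body.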

2. The Face

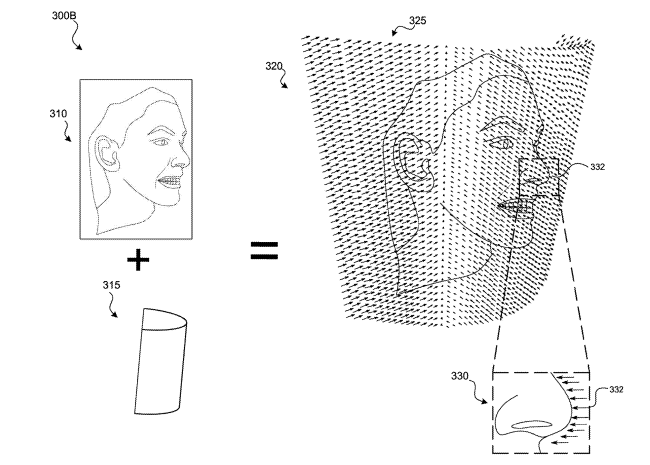

Facial movements such as smiling, squinting, or talking need to update quickly, with no perceptible lag. A heavy 3D mesh is too slow to update in real time.

The patent discusses a facial mapping technique called CylinderDepth. As the name suggests, the face is treated as a curved, roughly cylindrical surface. The system doesn’t need to know the 3D coordinate of every pore; it just needs to know the depth of each pixel on that curved surface.

This is incredibly bandwidth-efficient, allowing facial expressions to transmit over FaceTime without stuttering.
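The article does not spell out how CylinderDepth stores its data, so the following is only a sketch under an assumed layout: each pixel column is an angle around a vertical axis, each row is a height, and the stored value is the distance from that axis. The function name `unproject` and the default ranges are hypothetical.

```python
import math

def unproject(depth_map, theta_range=math.pi, height=0.25):
    """Turn a cylindrical depth map into 3D points.

    depth_map[r][c] holds a radial distance (meters, assumed) from the
    cylinder axis. Columns sweep theta_range radians around the axis,
    rows span `height` meters from top to bottom.
    """
    rows, cols = len(depth_map), len(depth_map[0])
    points = []
    for r in range(rows):
        y = height * (0.5 - (r + 0.5) / rows)
        for c in range(cols):
            theta = theta_range * ((c + 0.5) / cols - 0.5)
            radius = depth_map[r][c]
            points.append((radius * math.sin(theta), y, radius * math.cos(theta)))
    return points

if __name__ == "__main__":
    # A featureless "face": every pixel sits 10 cm from the axis.
    flat = [[0.10] * 8 for _ in range(4)]
    for x, y, z in unproject(flat)[:3]:
        print(f"{x:+.3f} {y:+.3f} {z:+.3f}")
```

Under this layout, an expression change only alters one number per pixel, and the pixel's position in the grid supplies the other two coordinates for free. That is one plausible reading of why the format is light on bandwidth.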

3. The Hair

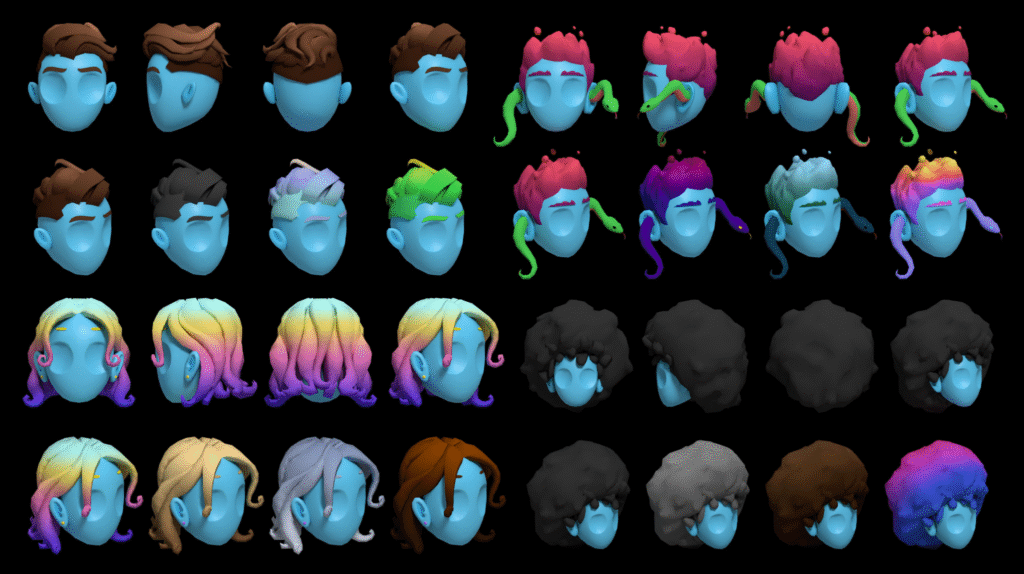

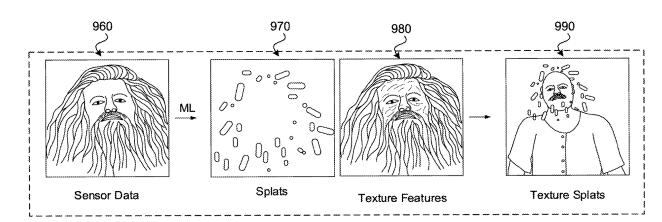

This is the real deal. Instead of using polygons (triangles) or strands (lines), the patent describes using 3D Gaussian Splatting (3DGS).

It is a cutting-edge 3D rendering technique that builds photorealistic scenes from photos or videos and renders them in real time.

Imagine a cloud of thousands of tiny, colored, translucent ellipsoids (blobs). Each blob has a color, a transparency level, and a direction it stretches. When you stack thousands of these fuzzy blobs together, they blend to create soft, volumetric shapes that look convincingly like hair.

Because these “splats” don’t have hard edges like polygons, they handle the “fuzziness” of hair naturally. They are computationally cheap to render but look voluminous and soft to the human eye.
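The blending of fuzzy blobs can be sketched for a single pixel. This is a simplified illustration, not the patent's renderer: the splats are already flattened to 2D and axis-aligned (real 3DGS projects rotated 3D ellipsoids onto the screen), and the `Splat` fields and `shade_pixel` name are made up for the example. The front-to-back alpha blending itself is the standard way splats are combined.

```python
import math
from dataclasses import dataclass

@dataclass
class Splat:
    x: float        # screen-space center
    y: float
    depth: float    # distance from the camera
    sx: float       # spread along x
    sy: float       # spread along y (long and thin suits a hair wisp)
    color: tuple    # (r, g, b) in 0..1
    opacity: float  # peak opacity at the center, 0..1

def shade_pixel(px, py, splats):
    """Blend splats front to back; return (rgb, remaining transmittance)."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # how much light from behind still gets through
    for s in sorted(splats, key=lambda s: s.depth):
        # Gaussian falloff: full strength at the center, fading smoothly outward.
        falloff = math.exp(-0.5 * (((px - s.x) / s.sx) ** 2 + ((py - s.y) / s.sy) ** 2))
        alpha = s.opacity * falloff
        for i in range(3):
            color[i] += transmittance * alpha * s.color[i]
        transmittance *= 1.0 - alpha
    return tuple(color), transmittance

if __name__ == "__main__":
    wisps = [
        Splat(0.0, 0.0, 1.0, 0.2, 2.0, (0.4, 0.2, 0.1), 0.8),
        Splat(0.1, 0.0, 2.0, 0.2, 2.0, (0.1, 0.05, 0.0), 0.8),
    ]
    print(shade_pixel(0.0, 0.0, wisps))
    print(shade_pixel(0.3, 0.0, wisps))
```

Because the falloff is smooth, a pixel near the edge of a wisp gets a partial contribution instead of an abrupt on/off, which is where the soft, hard-edge-free look comes from.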

How Is Meta Solving This Problem?

Meta has been showing off their “Codec Avatars” for years, and admittedly, they look incredible. However, Meta’s approach typically relies on massive neural networks that, until recently, required a high-end gaming PC to run. They are trying to brute-force photorealism.

Apple’s approach is different. It is an exercise in constraint. They designed a system specifically for the M2 and R1 chips found in the Vision Pro.

By using low-data “maps” for the face and lightweight “splats” for the hair, they are gaming the system. They are prioritizing presence over absolute graphical perfection, using as little computing power as possible.

Conclusion

The patent reveals that Apple isn’t trying to simulate a human being atom by atom. They are trying to master the illusion of a perfect avatar. By stitching together a PIFu body, a depth-map face, and Gaussian Splat hair, they are creating a system that can make even a “Frankenstein’s monster” of stitched-together parts look human.