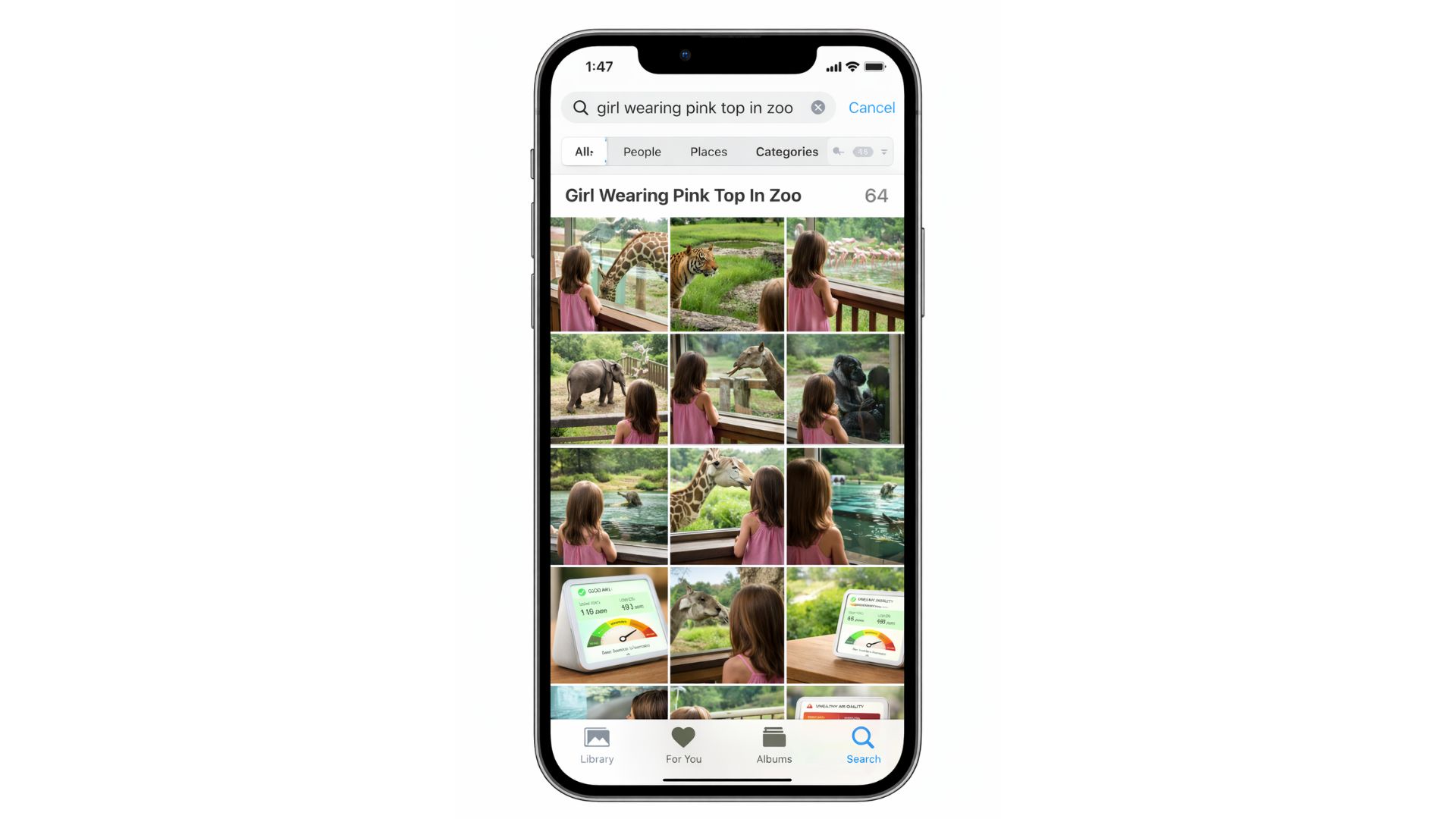

Think about the last time you tried to find a photo on your phone. You knew exactly what it looked like (your friend in a red shirt, or that trip to Paris), but scrolling through thousands of images felt endless. Typing a keyword didn’t help much either: the search brought up random results, some close, some completely off.

This everyday frustration is what Apple’s new patent sets out to solve. Instead of relying only on simple keywords or dates, the patent describes a smarter system that understands the meaning of your search and combines it with quick filters like time and location. In other words, it’s about teaching your device to search the way you think, making it easier to find the memories and files that matter most.

Why Finding Files Is Harder Than It Should Be

One of the biggest frustrations with today’s devices is the sheer volume of files people store. Over time, smartphones and computers collect thousands of photos, videos, and documents, creating a digital library that is often unorganized.

Searching through this clutter to find one specific file can feel overwhelming and time-consuming. Another challenge lies in the limited metadata that files usually contain. Metadata often records only basic details such as the date or location of creation, but it doesn’t describe the actual content.

For example, a photo might tell you it was taken in June in Paris, but it won’t tell you that it shows your friend wearing a red shirt. Without descriptive detail, searches become less effective and often frustrating.

There is also the issue of keyword ambiguity. Words can have multiple meanings, and search systems often fail to understand context. For instance, searching for “orange cat” might return pictures of oranges, cats, or unrelated graphics because the system matches words literally rather than interpreting what the user actually meant.

How It Works

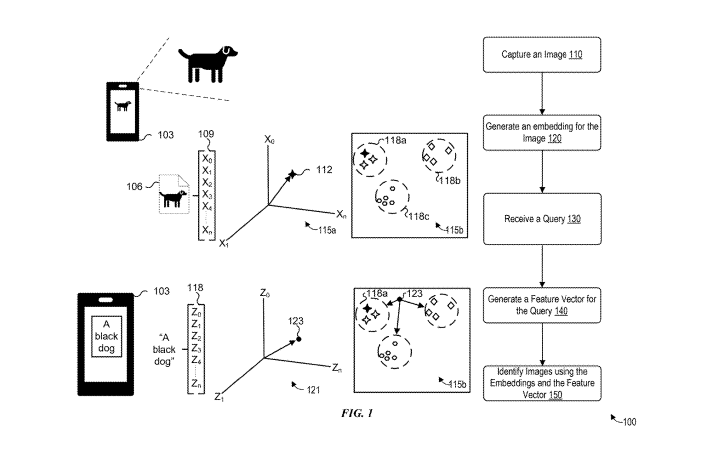

Apple’s new search system works by combining two smart steps: understanding meaning and using context. First, every file on your phone, whether it’s a photo, video, or document, gets a kind of digital fingerprint called an embedding. This fingerprint captures what’s inside the file, like colors, shapes, or words, so the phone can recognize it later.
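To make the fingerprint idea a little more concrete, here is a minimal sketch in Python. It assumes each file has already been turned into a short list of numbers and compares those lists with cosine similarity, a common way to measure how close two embeddings are. The three-number vectors, the file names, and the `rank_by_similarity` helper are all invented for illustration; the patent does not say which model or similarity measure Apple uses.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings: real ones have hundreds of dimensions and come
# from a trained model. These values are made up for the example.
file_embeddings = {
    "IMG_0001.jpg": [0.9, 0.1, 0.0],  # imagine: a person in a red shirt
    "IMG_0002.jpg": [0.1, 0.9, 0.1],  # imagine: a cat on a sofa
    "notes.txt":    [0.0, 0.2, 0.9],  # imagine: a text document
}

def rank_by_similarity(query_vector, embeddings):
    """Return file names ordered from most to least similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in embeddings.items()
    ]
    return [name for _score, name in sorted(scored, reverse=True)]

# A made-up vector standing in for a search like "red shirt"
print(rank_by_similarity([1.0, 0.0, 0.0], file_embeddings))
```

The point is only that files whose fingerprints sit close to the query’s vector rise to the top, whether or not any keyword matches.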

When you type a search, the phone turns your words into a feature vector, which is just a mathematical version of your query. Then, a special model looks at your query and splits it into two parts. One part is about meaning: who or what is in the file, what they’re doing, or how they look. The other part is about contextual things like the time or place.
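The splitting step can be sketched in a few lines of Python. The article describes a model doing this work; the version below swaps in a simple pattern match on month names, purely as a stand-in, so treat `split_query` and its rules as assumptions rather than anything from the patent. It only handles phrases like “in June” or “during June” and ignores locations entirely.

```python
import re

MONTHS = [
    "january", "february", "march", "april", "may", "june",
    "july", "august", "september", "october", "november", "december",
]

# Matches "in <month>" or "during <month>", case-insensitively.
_MONTH_PATTERN = re.compile(
    r"\b(?:in|during)\s+(" + "|".join(MONTHS) + r")\b",
    re.IGNORECASE,
)

def split_query(query):
    """Split a query into (semantic_text, context).

    Hypothetical helper: a real system would use a trained model here,
    not a regular expression.
    """
    context = {}
    match = _MONTH_PATTERN.search(query)
    if match:
        # Store the month as a number (January = 1) for easy filtering later.
        context["month"] = MONTHS.index(match.group(1).lower()) + 1
        # Remove the time phrase so only the descriptive part remains.
        query = query[:match.start()] + query[match.end():]
    semantic_text = " ".join(query.split())  # tidy up leftover whitespace
    return semantic_text, context

print(split_query("Joey wearing a red shirt during June"))
```

The descriptive half would go on to the semantic search, while the small `context` dictionary is kept aside as a cheap filter.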

The phone first uses the meaning part to find files that match your description. For example, if you search “Joey wearing a red shirt,” it will find all photos of Joey in red shirts. Then it uses the context to narrow those results. If you add “during June,” it will filter the photos to only those taken in June.

Finally, the phone shows you the exact files that match both meaning and context. This way, you don’t have to scroll endlessly or remember exact dates. You just describe the memory, and the phone finds it for you.
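Put together, the two steps might look something like the sketch below: a meaning-based pass first, then a plain metadata filter on whatever survives. Everything here is hypothetical, including the `IndexedFile` record, the two-number embeddings, the `0.8` similarity threshold, and the choice of cosine similarity. It is meant to show the order of operations described above, not how Apple implements it.

```python
import math
from dataclasses import dataclass

@dataclass
class IndexedFile:
    name: str
    embedding: list  # made-up "fingerprint" of the file's content
    month: int       # month taken, from ordinary metadata (January = 1)

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def two_stage_search(query_vector, context, files, threshold=0.8):
    # Stage 1: keep files whose content is close in meaning to the query.
    candidates = [
        f for f in files
        if cosine_similarity(query_vector, f.embedding) >= threshold
    ]
    # Stage 2: narrow the candidates with a simple metadata check, if any.
    month = context.get("month")
    if month is not None:
        candidates = [f for f in candidates if f.month == month]
    return [f.name for f in candidates]

# Invented library and query vector for "Joey wearing a red shirt"
library = [
    IndexedFile("joey_red_june.jpg", [0.9, 0.1], 6),
    IndexedFile("joey_red_march.jpg", [0.8, 0.2], 3),
    IndexedFile("beach_june.jpg", [0.1, 0.9], 6),
]
query_vector = [1.0, 0.0]

print(two_stage_search(query_vector, {}, library))            # meaning only
print(two_stage_search(query_vector, {"month": 6}, library))  # meaning + "during June"
```

Because the month check is an ordinary comparison, it costs almost nothing next to the similarity pass.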

How It Helps Apple Users

Apple’s new search method could make a big difference in how people find their files. Right now, keyword searches often bring up results that don’t really match what you’re looking for, because they only match words without understanding their meaning.

Apple’s system looks at the meaning behind your search, so results are more accurate. It also works more efficiently by splitting the search into two parts: one part focuses on the meaning of the words, and the other part checks details like dates and locations.

This saves memory and processing power, which is especially useful on phones. Another big advantage is that the system isn’t just for photos. It could also work with videos, audio recordings, documents, and spreadsheets.

In short, this technology could make searching across all kinds of files faster, easier, and much more reliable.

By using semantic search, the system can distinguish between different meanings of words (like “orange” the color vs. “orange” the fruit), leading to much more accurate results.

Efficiency: Semantic searches are powerful but can be “computationally demanding.” By stripping out dates and locations and using them as simple filters at the end, Apple’s approach could make the process faster and easier on your device’s battery.

Beyond Just Photos: While the patent focuses heavily on image files, Apple notes that these techniques could also be applied to audio, video, word processing, and spreadsheet files.

The Bigger Picture

Apple’s new patent represents a larger shift in how technology is evolving to handle the overwhelming amount of digital information people create every day.

The innovation blends AI-powered semantic understanding with traditional metadata, creating a system that can interpret the meaning behind a user’s query while also using concrete details like dates and locations to refine results.

This dual approach makes digital life more manageable by reducing the frustration of endless scrolling or vague keyword searches. If Apple brings this technology into everyday apps such as Photos, Files, or Spotlight, users could experience a far more intuitive way of finding what they need.

Instead of remembering exact dates or file names, people could simply describe what they’re looking for in natural language, and the system would deliver precise matches. In essence, Apple is pointing toward a future where searching across devices feels less like digging through clutter and more like having a smart assistant who understands both context and detail.