Audio accessories such as earphones, headphones, and earbuds are no longer mere music players. Over the last few years, companies have turned them into tiny, high-tech devices that do more than stream sound. They track health, cancel noise, and even add ultra-wideband (UWB) positioning and other smart features.

Samsung’s UWB earbuds, for example, added precise spatial awareness to the wearable audio category. Other vendors have focused on better active noise cancellation, bone-conduction voice pickup, or biometric sensing inside the ear.

These advances are largely incremental: each new product refines battery life, comfort, or communication.

But many thorny problems remain: reliably understanding speech in noisy places, preserving privacy, and capturing quiet or whispered commands without bulky external microphones.

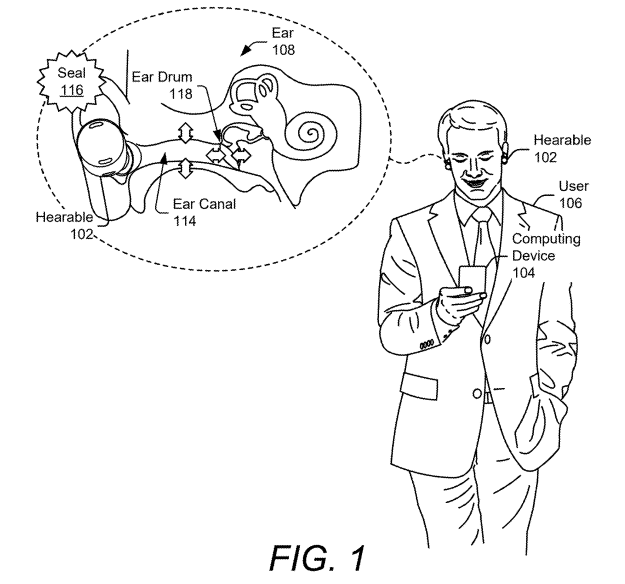

Google’s new patent attacks one of those remaining problems by moving the sensing from the open air into the ear canal itself. The patent shows an approach that listens to the physical effects of speech inside the ear, rather than depending only on sound waves traveling through the room.

The Challenges

Modern voice assistants depend mostly on microphones that pick up sound traveling through the air. That works fine in quiet locations, but everyday life is rarely quiet. Three common problems make microphone-based voice recognition fragile:

- Background noise: When a user is in a café, on a bus, or near music, the microphone captures a mix of the user’s voice and everything else. Separating the two is difficult and error-prone.

- Occlusion and sealing: Many earbuds sit snugly in the ear canal. That seal improves sound quality and noise cancellation, but it also changes how, and how well, airborne sound reaches the microphone.

- Privacy and leakage: Speaking aloud can be overheard by others. Users sometimes want to give commands quietly or prevent their speech from being audible to people nearby.

Simply put, microphones often hear too much (noise) or too little (when sound is blocked), and they expose speech to everyone around.

The patent highlights these limits and frames the problem as one of finding a more reliable, private way to sense what a person says while wearing an ear device.

Google’s Solution: Listening from Inside the Ear

Instead of trying to hear a person’s voice through the air, Google’s patent suggests something very different: listening to what happens inside the ear when a person speaks.

When a person talks, it is not only sound that moves. The jaw shifts, the throat vibrates, and tiny pressure changes happen inside the ear canal. These movements slightly change the shape and space inside the ear. Even though people cannot feel these changes, they are always there.

Google’s idea is to use these tiny changes as clues to understand speech.

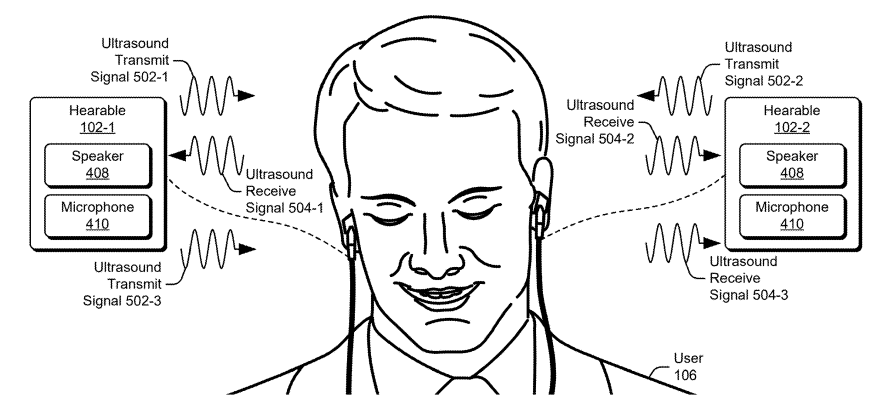

The earbud sends a very soft, very high-frequency (ultrasonic) signal into the ear. Because the frequency is so high, humans cannot hear it.

It works a bit like sonar, the way bats or submarines use sound to “see” their surroundings. The sound goes in, reflects off the walls of the ear canal, and comes back.

When a person starts talking, the inside of the ear changes slightly. The walls move, air pressure changes, and nearby muscles vibrate.

Because of this, the silent sound sent by the earbud also changes when it comes back.

It is similar to throwing a ball at a wall. If the wall is still, the ball comes back the same way every time. If the wall moves, the ball comes back differently. In the same way, speech makes the “echo” inside the ear change.

The earbud listens carefully to these changes.
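To make the echo idea concrete, here is a minimal simulation sketch. It is not taken from the patent: the sample rate, the 20 kHz probe frequency, the frame length, and the function names (`simulate_echo`, `echo_envelope`) are all assumptions chosen for illustration. The toy model treats speech-related movement as a small change in how strongly the canal reflects the probe tone, then measures the echo strength frame by frame.

```python
import math

SAMPLE_RATE = 96_000  # Hz; assumed, high enough to represent a 20 kHz tone
PROBE_FREQ = 20_000   # Hz; assumed ultrasonic probe frequency


def simulate_echo(n_samples, wall_motion):
    """Toy echo: the reflected tone's strength follows the canal wall.

    wall_motion(t) returns a small fractional change in reflection
    strength at time t (0.0 means the ear is perfectly still).
    """
    echo = []
    for i in range(n_samples):
        t = i / SAMPLE_RATE
        gain = 0.5 * (1.0 + wall_motion(t))
        echo.append(gain * math.sin(2 * math.pi * PROBE_FREQ * t))
    return echo


def echo_envelope(echo, frame_len=960):
    """Estimate echo strength per 10 ms frame.

    Each frame is correlated with a sine and a cosine at the probe
    frequency (quadrature demodulation), which yields the amplitude
    of the returned tone in that frame.
    """
    envelope = []
    for start in range(0, len(echo) - frame_len + 1, frame_len):
        i_sum = 0.0
        q_sum = 0.0
        for k in range(frame_len):
            phase = 2 * math.pi * PROBE_FREQ * (start + k) / SAMPLE_RATE
            i_sum += echo[start + k] * math.sin(phase)
            q_sum += echo[start + k] * math.cos(phase)
        envelope.append(2.0 * math.hypot(i_sum, q_sum) / frame_len)
    return envelope
```

With a still ear (`wall_motion` always returning 0.0), every frame of the envelope has the same value, like the ball bouncing off a wall that does not move. With a slow wobble such as `lambda t: 0.1 * math.sin(2 * math.pi * 5 * t)`, the envelope rises and falls from frame to frame, and that variation is the signal a real system would analyze.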

The earbud and phone then look at how the returned signal moves over time. They turn it into simple patterns, like pictures that show movement and rhythm.

These patterns are not normal sound recordings. They are more like fingerprints of how the ear behaved while the person was speaking.
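One plausible way to build such a “picture” is a small time-by-rate map, similar in spirit to a spectrogram. The sketch below is an assumption about how this step could look, not the patent's actual processing: `movement_pattern`, the window size, and the hop size are hypothetical. It takes a series of echo-strength readings and, for each short window, measures how strongly the readings fluctuated at several rates.

```python
import cmath
import math


def movement_pattern(envelope, window=8, hop=4):
    """Turn a 1-D echo-strength series into a 2-D time-by-rate map.

    Each row covers one window of time. Each column is how strongly the
    echo fluctuated at one rate inside that window (the magnitude of a
    small discrete Fourier transform bin).
    """
    rows = []
    for start in range(0, len(envelope) - window + 1, hop):
        chunk = envelope[start:start + window]
        mean = sum(chunk) / window
        centered = [x - mean for x in chunk]  # drop the constant echo level
        row = []
        for k in range(1, window // 2 + 1):   # bin 0 was removed with the mean
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * n / window)
                for n, x in enumerate(centered)
            )
            row.append(abs(coeff) / window)
        rows.append(row)
    return rows
```

A perfectly steady echo produces rows of zeros, while a rhythmic wobble lights up the column that matches its rate. Note that the map stores only how the echo changed, not an audio recording of the voice.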

Over time, Google’s system learns that certain patterns usually mean certain words.

For example:

- One pattern may often appear when someone says “Hey Google.”

- Another pattern may appear when someone says “Call Mom.”

Using artificial intelligence, the system learns these links, just like voice assistants already learn from microphone recordings.

But here, it learns from ear movement instead of air sound.

Once the system recognizes the pattern, it understands what the person said and sends the command to the phone, assistant, or app.
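The learning-and-matching step can be sketched with a deliberately simple stand-in. The patent describes a trained AI model; the nearest-centroid matcher below is a much simpler technique swapped in for illustration, and the function names, the distance threshold, and any feature values passed to it are hypothetical.

```python
import math


def centroid(vectors):
    """Average several feature vectors of equal length into one."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def train(examples):
    """Map each command to the average pattern seen for it.

    examples: {command: [feature_vector, ...]}
    """
    return {command: centroid(vectors) for command, vectors in examples.items()}


def recognize(model, features, max_distance=0.5):
    """Return the command whose average pattern is closest to `features`.

    Returns None when nothing is close enough, so unfamiliar ear
    movement is not forced onto a known command.
    """
    best_command = None
    best_distance = float("inf")
    for command, center in model.items():
        distance = math.dist(features, center)
        if distance < best_distance:
            best_command, best_distance = command, distance
    return best_command if best_distance <= max_distance else None
```

For example, after `model = train({"hey google": [[0.9, 0.1, 0.2], [1.0, 0.0, 0.3]], "call mom": [[0.1, 0.8, 0.7], [0.2, 0.9, 0.6]]})` with those made-up vectors, a new pattern close to the first group is labeled “hey google”, and a pattern far from both groups returns `None`. A production system would replace this with a trained neural network and far richer features.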

Why This Method Works Better in Daily Life

This approach has some strong everyday advantages.

First, it ignores most background noise. Traffic, music, and people talking nearby happen outside the ear. Since this system focuses on what happens inside the ear, those noises matter much less.

Second, it improves privacy. The silent signal cannot be heard by others. People nearby cannot easily listen in. A person could give commands quietly without speaking loudly in public.

Third, it works even when someone whispers. Because it looks at internal movement, not just loudness, it can detect speech even when the voice is very soft.

In simple terms, Google is teaching these devices to “feel” speech from inside the body, instead of just “hearing” it from outside.

Similar Solutions in the Market and in Research

The idea follows a broader research direction known as “silent speech” or non-airborne speech sensing. Academic work has explored many approaches:

- Bone conduction and throat mics capture vibrations through bone or skin. They are robust to external noise but typically require different placement (neck or jaw) and can be uncomfortable.

- EMG (electromyography) and facial sensors read muscle activity directly; these can be accurate but need electrodes on the face or neck.

- Ultrasound and pressure sensors near the mouth or inside the ear have appeared in research prototypes and some patents; they show the same principle that internal mechanical changes carry speech information.

Various companies (including Apple, Samsung, and others) have patents or prototypes that touch on in-ear sensing or bone conduction capture, but most commercial solutions focus on single aspects (playback, health metrics, or simple voice pickup).

Google’s patent represents a pragmatic, consumer-ready direction. Rather than relying on radically new physics, it shifts the sensing location and exploits what the body already does when a person speaks.

That shift could make voice interfaces more private, reliable, and useful in daily life.

It builds on existing “silent speech” research and on incremental earbud innovations (UWB positioning, better active noise cancellation, biometric sensing), but it stitches those threads into a cohesive system designed for mainstream wearables.