Inside Google’s “Dreaming” Patent: Turning Imagined Things Into Real Search Results

Imagine you are planning a trip, a date, or a party. You can almost see the place in your head: a quiet coastal town with pastel houses, narrow stone streets, and a cafe with a gorgeous sunset view. You know the feeling you want. You just don’t know the name of a place that fits.

So you open Google and start typing. “Small European coastal town with colorful houses.” The results are a mix of travel blogs, stock photos, listicles, and ads. If you are lucky, you find a place that fits, but not every time. So you try again. After twenty minutes, you give up and ask a friend.

Google has been thinking about this problem, and a patent application gives us a detailed look at one possible answer. The idea, in plain terms: what if you could ask an AI to draw the place you are imagining, and then let Google search the real world for places that look like the drawing?

The patent is titled “Location Search Based on Model-Generated Synthetic Images.” It lays out a vision for how generative AI, visual search, and Google Maps might come together into a single new way of finding things in the world.

This article walks through what the patent actually says, how the proposed system would work, what it could be used for beyond travel, and the bigger questions it raises. It is not a product announcement, and Google has not confirmed that any of this will ship as described. But patents are one of the clearest windows we get into how big technology companies are thinking, and this one is unusually detailed.

What is the problem Google is trying to solve?

The patent points out that travel websites in particular use popular keywords to attract clicks without actually matching what the searcher wanted. It also notes that traditional travel planning tools tend to be restrictive. They assume you already know where you want to go, or they limit you to a small set of pre-defined destinations. None of them help you when your starting point is just a vague mental image.

The document calls this “the gap between imagination and reality in the travel planning process.” The same gap shows up in shopping, in food, in fashion, and in entertainment. Words alone are often not enough to express what you want. And reference photos are often not available, especially when the thing you want does not yet exist in any single real place.

Google’s proposed answer is to use AI image generation to close that gap. Instead of forcing you to describe your idea in words and hope the search engine guesses correctly, the system would let you generate a picture of your idea first, and then use that picture as the actual search query.

How the system would work

The basic flow described in the patent has four steps.

Step one: you tell the system what you are imagining. This can happen in several ways. You can type a freeform description into a text box, the way you would type a normal search. You can tap a series of suggested buttons or chips to build up a description piece by piece. You can speak to it. You can upload a photo for it to riff on. Or you can combine these methods.

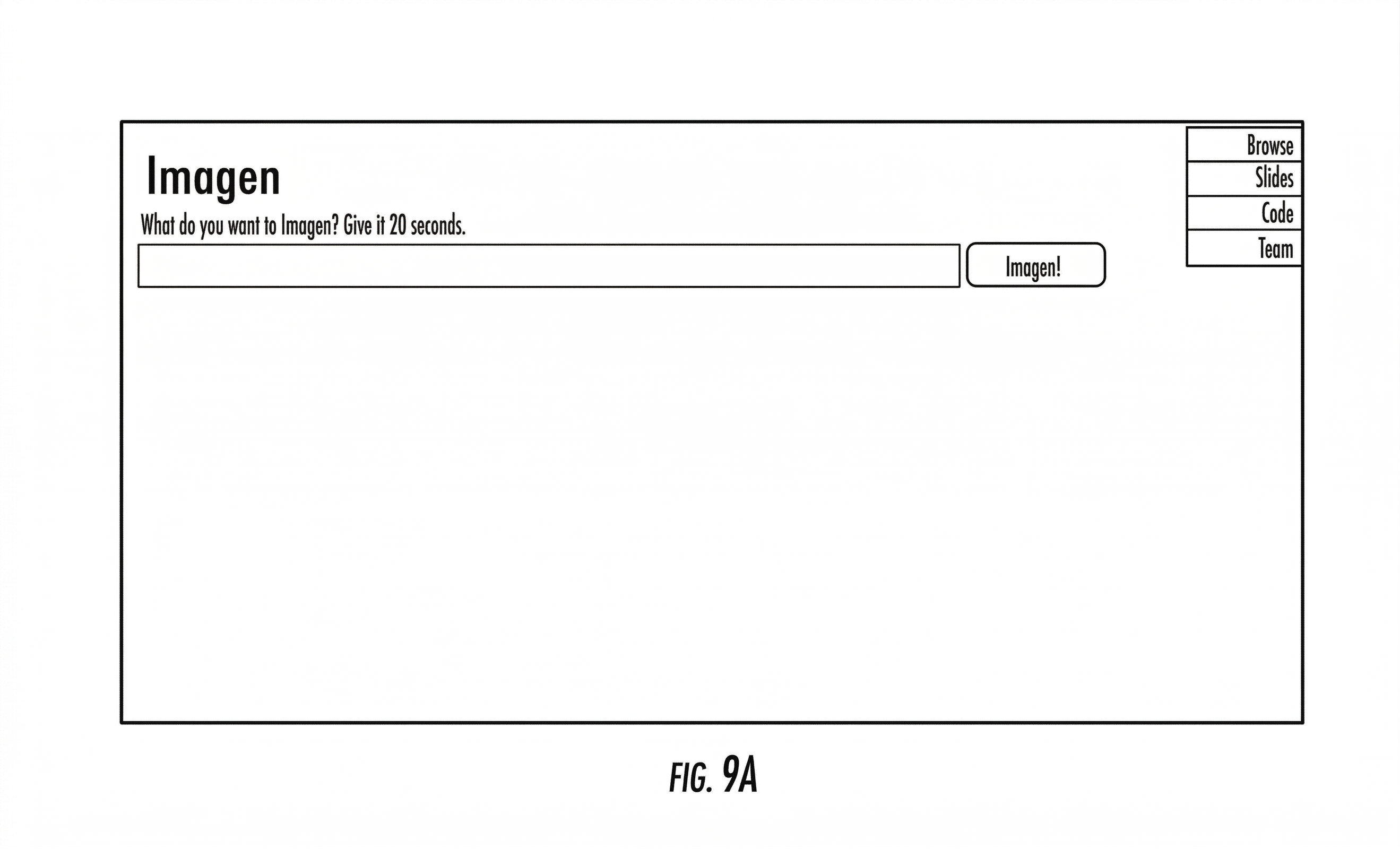

Step two: an AI image model draws what you described. The patent specifically mentions diffusion models, which are the same kind of AI system behind tools like Google’s Imagen, OpenAI’s DALL-E, and Midjourney.

The patent’s own examples, however, feature Imagen in particular, so chances are Google would use that model to create the images of places.

These models have been trained on huge collections of images and can generate new pictures from text descriptions. The system in the patent would generate not just one image but several candidates, so you can scroll through and pick the one that best matches what you had in mind.

Step three: Google searches the real world for places that look like the image. This is where the system connects to Google’s existing strengths. Google already runs a powerful visual search tool called Google Lens, which can take a photo and find similar images on the web or identify the objects in it. The patent proposes using the same kind of visual matching, but pointed at databases of real-world locations, businesses, products, and listings. The AI-generated image becomes the search query. The output is a list of real places, real restaurants, or real products that visually resemble it.

Step four: you get results you can act on. The patent describes results being shown on a map, with addresses, ratings, contact information, directions, travel options, and in some cases an automatically generated itinerary if you are planning a multi-stop trip. For shopping, the results would include prices and links to buy. For restaurants, they would include menus and reservation options.

The whole loop is designed to feel less like a search engine and more like a creative tool that ends in a real-world destination.
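The four steps above can be sketched as a small pipeline. This is only an illustration of the flow, not anything taken from the patent: the function `dream_search`, the `Result` fields, and the three stand-in functions are hypothetical names, and the real image generator and visual search are replaced by stubs that return canned data.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Result:
    """A real-world match the user can act on (fields are illustrative)."""
    name: str
    address: str
    rating: float


def dream_search(
    description: str,
    generate: Callable[[str], List[str]],
    choose: Callable[[List[str]], str],
    find_similar: Callable[[str], List[Result]],
) -> List[Result]:
    """Run the describe -> generate -> pick -> search loop once."""
    candidates = generate(description)   # step two: several candidate images
    picked = choose(candidates)          # the user picks the closest one
    return find_similar(picked)          # steps three and four: real places


# Stand-ins for the real components (assumptions, for demonstration only).
def fake_generate(description: str) -> List[str]:
    return [f"{description} (candidate {i})" for i in range(1, 4)]


def pick_first(candidates: List[str]) -> str:
    return candidates[0]


def fake_find_similar(image: str) -> List[Result]:
    return [Result("Example Harbor Town", "1 Example Quay", 4.6)]


results = dream_search(
    "quiet coastal town with pastel houses",
    fake_generate,
    pick_first,
    fake_find_similar,
)
for r in results:
    print(r.name, r.address, r.rating)
```

Passing the three stages in as functions keeps the sketch honest about what it does not know: each stage could be swapped for a real model or service without changing the loop itself.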

Although the patent’s title focuses on location search, the use cases described inside are much broader. The same approach is applied to other areas, such as food dishes, fashion and apparel, art, and music.

The “Dreaming” Feature

One of the most interesting parts of the patent is how widely it imagines this feature being available. The interface concept goes by various names in the figures, but the recurring label is “Dream it” or “Start dreaming.” And the patent suggests this button could appear almost everywhere Google has a presence.

The figures show the “Dreaming” process inside Google Lens, inside Google Search results pages, inside Google Photos, inside knowledge panels, inside shopping results, and even inside video players.

In one example, a user is watching a music video, sees an outfit they like, and taps a “Dream it” button below the video to create a generated image of a similar outfit and then search for clothes that look like it. In another example, a user is looking at a restaurant in a search result and uses “Dream it” to imagine a specific dish they want, then finds nearby restaurants that serve something similar.

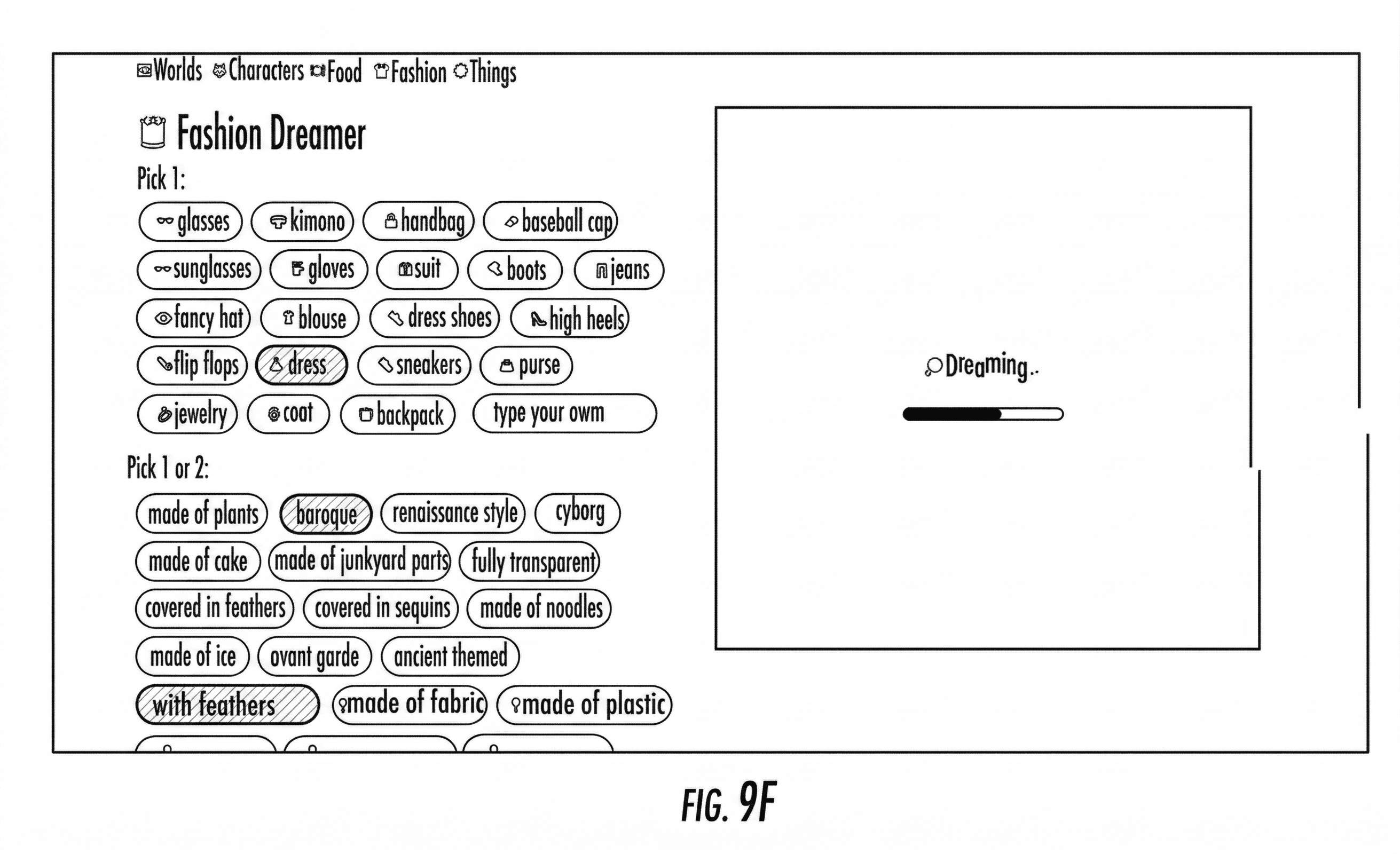

The patent also describes dedicated creative interfaces with names like “World Dreamer,” “Fashion Dreamer,” and “Food Dreamer.” These would let users build up a description by tapping chips.

For a fashion search you might tap “dress,” then “baroque,” then “with feathers.” For a world search you might tap “castle,” then “steampunk,” then “made of plants.” Each tap refines the prompt being sent to the image generator. A preview window shows the generated image updating as you make choices.
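To make the chip idea concrete, here is a minimal sketch of how taps might accumulate into a prompt. The class name `ChipPrompt` and the choice to join chips with commas are assumptions made for illustration; the patent does not specify how the prompt text is assembled.

```python
from typing import List


class ChipPrompt:
    """Collects tapped chips and exposes them as one text prompt."""

    def __init__(self) -> None:
        self.chips: List[str] = []

    def tap(self, chip: str) -> str:
        """Add a chip (ignoring repeats) and return the updated prompt."""
        if chip not in self.chips:
            self.chips.append(chip)
        return self.text()

    def text(self) -> str:
        # Assumption: chips are simply joined in the order they were tapped.
        return ", ".join(self.chips)


prompt = ChipPrompt()
prompt.tap("castle")
prompt.tap("steampunk")
print(prompt.tap("made of plants"))  # castle, steampunk, made of plants
```

In a real interface, each call to `tap` would also trigger a fresh preview image for the updated prompt.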

One figure shows a generated dress on the left side of the screen and a panel of real-world product matches on the right, with prices, retailer names, and links. Another figure shows the user cropping a portion of the generated image — for example, just the sleeves of a jacket — to refine the search to that specific element.

The point of all this detail is that Google is not just describing a single new feature. It is describing a way of searching that could be woven into nearly every product the company makes.

The personal touch

So far the system sounds like a clever combination of three things Google already has: an image generator, a visual search engine, and a database of real-world places. But the patent adds a fourth element that deserves attention, because it is the part that would make the experience feel different for each person.

The patent describes something called a “task graph” and a closely related concept called a “taste graph.” These are technical names for what amounts to a learned map of your interests and preferences. The system would build this map from signals like your location history, your past searches, your purchase history, your browsing activity, your social media activity, your ratings, and the people you follow.

This personalized profile would feed into the image generation step. In other words, two different people typing the exact same prompt would not necessarily get the exact same generated image.

The AI would tilt its drawings toward each user’s known tastes. Someone whose past behavior suggests they like minimalist Scandinavian design would see a different “cozy living room” than someone whose history points toward maximalist Victorian decor. And the real-world matches that come back at the end of the process would also be filtered through the same preference profile.
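One simple way to picture this tilt is a profile of style weights that nudges the prompt before it reaches the image generator. The sketch below is a guess at the general idea, not the patent’s method: the function `personalize_prompt`, the dictionary-of-weights profile, and the 0.5 threshold are all hypothetical.

```python
from typing import Dict


def personalize_prompt(prompt: str, taste: Dict[str, float],
                       threshold: float = 0.5) -> str:
    """Append the user's strongest style preference to the prompt.

    `taste` maps a style name to a weight between 0 and 1. If no style is
    strong enough, the prompt is returned unchanged.
    """
    strongest = max(taste.items(), key=lambda kv: kv[1], default=None)
    if strongest is None or strongest[1] < threshold:
        return prompt
    style, _weight = strongest
    return f"{prompt}, {style} style"


scandi_fan = {"minimalist Scandinavian": 0.9, "maximalist Victorian": 0.2}
victorian_fan = {"minimalist Scandinavian": 0.1, "maximalist Victorian": 0.8}

print(personalize_prompt("cozy living room", scandi_fan))
print(personalize_prompt("cozy living room", victorian_fan))
```

The same profile could be reused at the end of the process to reorder the real-world matches, which is the second place the patent says preferences come into play.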

The patent presents this as a benefit. It is, in a sense, the difference between a generic search engine and a friend who knows you. But it also concentrates a lot of personal information into a single system, and it raises questions worth thinking about — questions we will return to later.

What is actually under the hood

The patent goes into considerable technical depth, but the high-level picture is not hard to follow.

The image generator is described as a diffusion model, the same general type of AI as Google’s own Imagen system, which the patent name-checks directly. Diffusion models work by gradually turning random noise into a coherent picture, guided by a text description. They are the current state of the art for turning words into images.

The visual search step relies on what the patent calls “image embeddings.” This is a way of converting an image into a long list of numbers that captures its visual features. Once both the generated image and the real-world images in Google’s databases have been converted into these number lists, the system can quickly find which real images are mathematically closest to the generated one. This is roughly how Google Lens already works.
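A toy version of that comparison fits in a few lines. The three-number vectors and place names below are made up for illustration (real embeddings have hundreds or thousands of dimensions and come from a trained model), and cosine similarity is used here as one common way to measure closeness; the patent does not say which measure Google would use.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Return 1.0 for vectors pointing the same way, 0.0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest(query: Sequence[float], catalog: Dict[str, Sequence[float]],
            top_k: int = 2) -> List[str]:
    """Rank catalog entries by similarity to the query embedding."""
    ranked = sorted(
        catalog.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]


# Made-up embeddings for three real-world photos.
catalog = {
    "pastel harbor": [0.9, 0.1, 0.0],
    "mountain lodge": [0.0, 0.2, 0.9],
    "stone alley": [0.6, 0.6, 0.1],
}
generated_image = [0.8, 0.3, 0.0]  # embedding of the AI-generated picture

print(nearest(generated_image, catalog))  # ['pastel harbor', 'stone alley']
```

At the scale of a real image index, a linear scan like this would be replaced by an approximate nearest-neighbor index, but the underlying comparison is the same.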

The patent also describes a quality-control step. Before showing generated images to the user, the system would score each candidate for “realism and hallucinations” — that is, how plausible the image looks and whether it contains obvious AI mistakes. Only the better candidates would be shown.
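As a rough sketch, that filter could look like the following. The two scores, the thresholds, and the ranking rule are assumptions chosen for illustration; the patent describes scoring for realism and hallucinations but, as summarized here, not how the scores are computed or combined.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    image_id: str
    realism: float        # 0 to 1, higher means more plausible
    hallucination: float  # 0 to 1, higher means more visible AI mistakes


def select_candidates(candidates: List[Candidate],
                      min_realism: float = 0.6,
                      max_hallucination: float = 0.3,
                      keep: int = 3) -> List[Candidate]:
    """Drop weak candidates, then keep the best few of what remains."""
    passing = [
        c for c in candidates
        if c.realism >= min_realism and c.hallucination <= max_hallucination
    ]
    # Hypothetical ranking rule: reward realism, penalize hallucination.
    passing.sort(key=lambda c: c.realism - c.hallucination, reverse=True)
    return passing[:keep]


batch = [
    Candidate("a", realism=0.9, hallucination=0.1),
    Candidate("b", realism=0.7, hallucination=0.5),   # too many artifacts
    Candidate("c", realism=0.65, hallucination=0.2),
    Candidate("d", realism=0.5, hallucination=0.1),   # not realistic enough
]
print([c.image_id for c in select_candidates(batch)])  # ['a', 'c']
```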

Finally, the patent mentions running some of the lighter parts of the system on a user’s device, while heavier image generation would happen on Google’s servers. This is consistent with how most cloud AI products work today.

The technical details matter less than the overall shape. What Google is patenting is not a single new model but a particular combination of existing pieces, plus the user interface that ties them together.

Why this matters

Step back from the technical details and you can see several reasons this patent is worth paying attention to.

Maps could become a discovery product, not just a directory. Today, Google Maps is mostly a tool for finding places you already know exist. You search for a restaurant by name, or for a category like “coffee shops near me.” The system in the patent would let Maps surface places you had no way to name in advance. That is a meaningful shift in what a map application is for.

It is a response to how younger users actually search. A growing share of younger people now turn to Instagram, TikTok, and Pinterest for travel ideas, shopping inspiration, and restaurant recommendations. These platforms are visual and inspiration-driven in a way that traditional text search is not. A “Dream it” button across Google’s products is, among other things, an attempt to bring inspiration-driven discovery back onto Google’s surfaces.

It connects several Google products that have been somewhat separate. Google Lens, Google Maps, Google Shopping, Google Search, and Google’s image generation research have largely been developed as distinct projects. This patent describes a workflow that uses all of them at once. Whether or not the specific “Dream it” branding ever ships, the underlying integration is a direction Google appears to be heading.

The monetization paths are clear. Shopping results with prices and buy links, restaurant results with reservation buttons, hotel results with booking flows — these are all places where Google already makes money. Pointing a generative search workflow at them is a natural extension.

The argument about efficiency cuts both ways. The patent argues that letting users generate an image first actually reduces wasted searches, because people spend less time refining bad text queries. That might be true. But generating images is far more computationally expensive than returning a list of links. Whether the overall system ends up using more or less computing power than traditional search is an open question.